In February 2024, Chien-Yao Wang, I-Hau Yeh, and Hong-Yuan Mark Liao introduced YOLOv9, a computer vision model architecture that outperforms existing YOLO models, including YOLOv7 and YOLOv8.

This page describes the data format you will need to use to train a YOLOv9 model. Note: YOLOv9 uses the same format as YOLOv7.

Roboflow supports converting 30+ different object detection annotation formats into the TXT format that YOLOv9 needs (the same format YOLOv7 uses), and we automatically generate your YAML config file for you. Plus, all 90,000+ datasets on Roboflow Universe are available in this format for seamless use in custom training.

With Roboflow, you can deploy a computer vision model without having to build your own infrastructure.

Below, we show how to convert data to and from YOLOv9 PyTorch TXT. We also list popular models that use the YOLOv9 PyTorch TXT data format. Our conversion tools are free to use.

Free data conversion

SOC II Type 2 Compliant

Trusted by 250,000+ developers

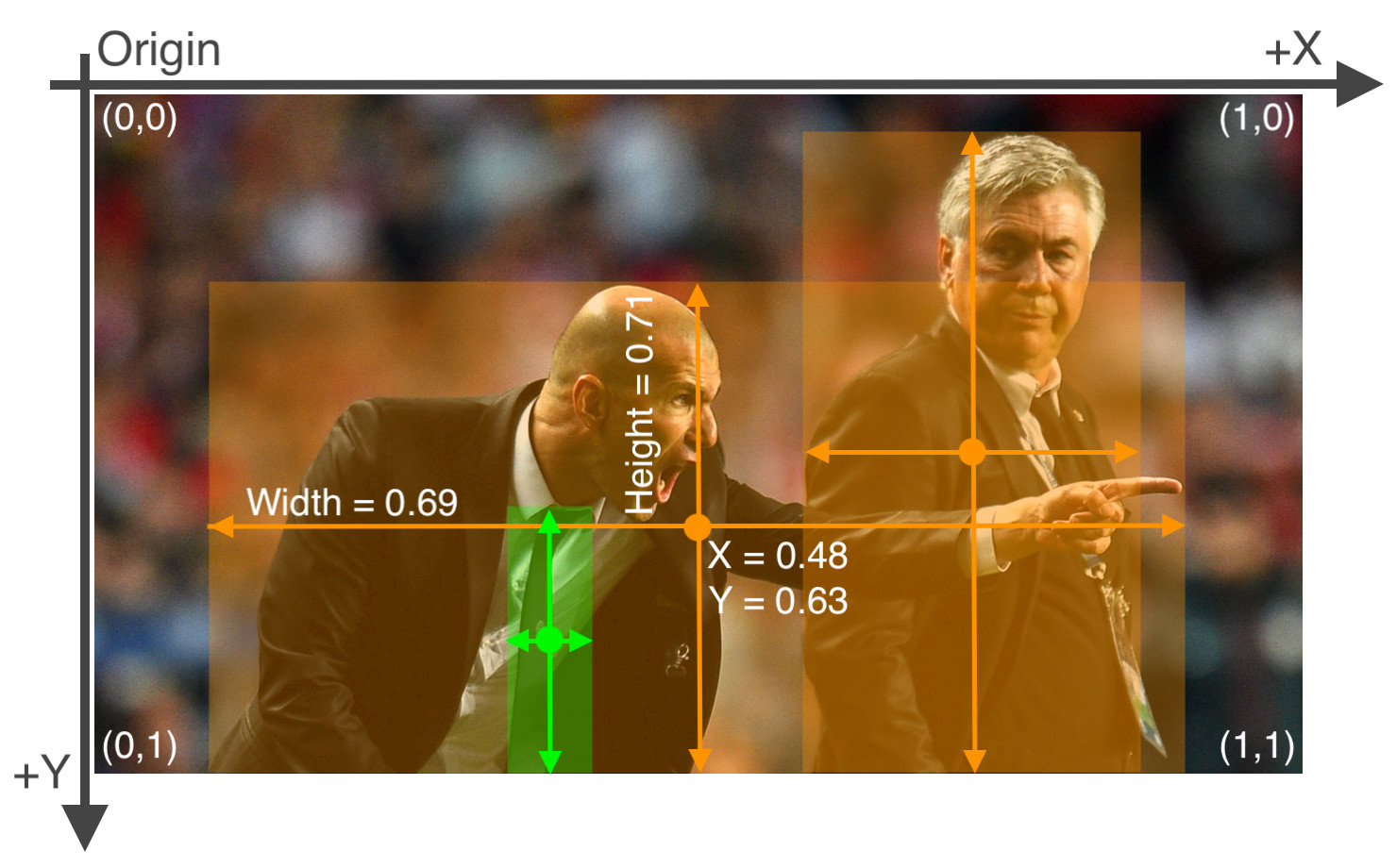

Each image has one txt file with a single line for each bounding box. The format of each row is

class_id center_x center_y width height

where fields are space delimited, and the coordinates are normalized from zero to one.

Note: To convert to normalized xywh from pixel values, divide x (and width) by the image's width and divide y (and height) by the image's height.
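The normalization described in the note above can be sketched in a few lines of Python. This is a minimal illustration, not part of any Roboflow or YOLOv9 tooling: the function name `to_yolo_row` is hypothetical, and it assumes the source annotation is a pixel-space box given by its top-left and bottom-right corners.

```python
def to_yolo_row(class_id, x_min, y_min, x_max, y_max, img_w, img_h):
    """Build one 'class_id center_x center_y width height' row.

    Assumes (x_min, y_min) and (x_max, y_max) are pixel corners of the box
    and img_w / img_h are the image dimensions in pixels.
    """
    # Box center and size in pixels.
    center_x_px = (x_min + x_max) / 2
    center_y_px = (y_min + y_max) / 2
    width_px = x_max - x_min
    height_px = y_max - y_min

    # Divide x values by the image width and y values by the image height
    # so every coordinate falls between zero and one.
    center_x = center_x_px / img_w
    center_y = center_y_px / img_h
    width = width_px / img_w
    height = height_px / img_h

    # Fields are space delimited.
    return f"{class_id} {center_x:.6f} {center_y:.6f} {width:.6f} {height:.6f}"


if __name__ == "__main__":
    # A 200x100 pixel box in a 1000x500 image, labeled with class 1.
    print(to_yolo_row(1, 100, 50, 300, 150, 1000, 500))
```

The six-decimal rounding is an arbitrary choice for readability; the format itself does not require a fixed precision.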

1 0.617 0.3594420600858369 0.114 0.17381974248927037

1 0.094 0.38626609442060084 0.156 0.23605150214592274

1 0.295 0.3959227467811159 0.13 0.19527896995708155

1 0.785 0.398068669527897 0.07 0.14377682403433475

1 0.886 0.40879828326180256 0.124 0.18240343347639484

1 0.723 0.398068669527897 0.102 0.1609442060085837

1 0.541 0.35085836909871243 0.094 0.16952789699570817

1 0.428 0.4334763948497854 0.068 0.1072961373390558

1 0.375 0.40236051502145925 0.054 0.1351931330472103

1 0.976 0.3927038626609442 0.044 0.17167381974248927

The `data.yaml` file contains configuration values that the model uses to locate images and to map class names to `class_id` values.

train: ../train/images

val: ../valid/images

nc: 3

names: ['head', 'helmet', 'person']
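To go the other way, from a TXT row back to pixel coordinates and a class name, a sketch like the following may help. It assumes the `class_id` in each row is a zero-based index into the `names` list from `data.yaml`. The function name `parse_yolo_row` and the image size used in the example are hypothetical, and the `names` list is copied inline rather than loaded from the YAML file to keep the example dependency-free.

```python
# Class names copied from the data.yaml example above.
NAMES = ['head', 'helmet', 'person']


def parse_yolo_row(row, names, img_w, img_h):
    """Convert one normalized TXT row into a class name and a pixel box."""
    parts = row.split()
    class_id = int(parts[0])
    center_x, center_y, width, height = (float(v) for v in parts[1:5])

    # Undo the normalization: x values scale by image width,
    # y values scale by image height.
    x_min = (center_x - width / 2) * img_w
    y_min = (center_y - height / 2) * img_h
    x_max = (center_x + width / 2) * img_w
    y_max = (center_y + height / 2) * img_h

    return {
        "label": names[class_id],  # class_id indexes into the names list
        "box": (x_min, y_min, x_max, y_max),
    }


if __name__ == "__main__":
    # Hypothetical 1000x500 image; class 1 maps to 'helmet'.
    print(parse_yolo_row("1 0.5 0.5 0.2 0.4", NAMES, 1000, 500))
```

With the `names` list shown above, every row in the sample annotation file has `class_id` 1 and therefore refers to `helmet`.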